这一篇是官方教程图像分类的最后一部分,介绍了VGG,GoogLeNet以及ResNet

这一章要记得笔记倒是不多,因为paddle的教程已经讲的很明白了

VGG

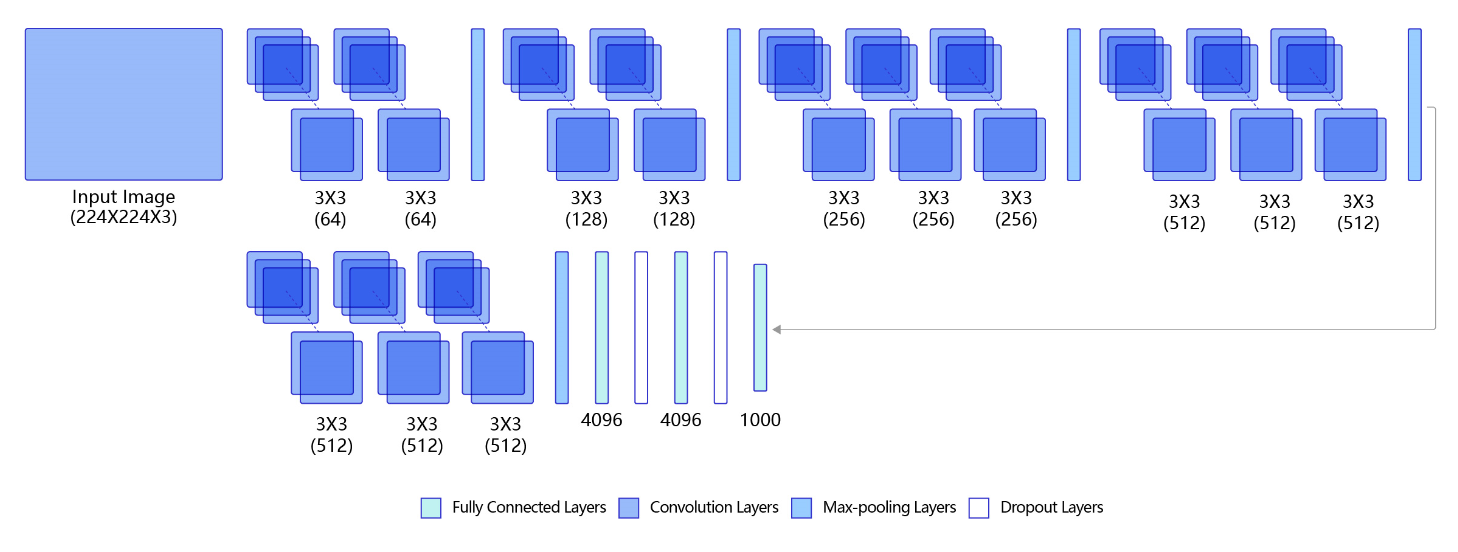

VGG结构相对简单易懂,每一层的卷积并不会改变输出的size,只是增加了channels,池化层每次将size减半,最后得到512×7×7的output,之后经过三层线性得到最终输出。

# -*- coding:utf-8 -*-

# VGG模型代码

import numpy as np

import paddle

# from paddle.nn import Conv2D, MaxPool2D, BatchNorm, Linear

from paddle.nn import Conv2D, MaxPool2D, BatchNorm2D, Linear

# 定义vgg网络

class VGG(paddle.nn.Layer):

def __init__(self):

super(VGG, self).__init__()

in_channels = [3, 64, 128, 256, 512, 512]

# 定义第一个卷积块,包含两个卷积

self.conv1_1 = Conv2D(in_channels=in_channels[0], out_channels=in_channels[1], kernel_size=3, padding=1, stride=1)

self.conv1_2 = Conv2D(in_channels=in_channels[1], out_channels=in_channels[1], kernel_size=3, padding=1, stride=1)

# 定义第二个卷积块,包含两个卷积

self.conv2_1 = Conv2D(in_channels=in_channels[1], out_channels=in_channels[2], kernel_size=3, padding=1,

stride=1)

self.conv2_2 = Conv2D(in_channels=in_channels[2], out_channels=in_channels[2], kernel_size=3, padding=1,

stride=1)

# 定义第三个卷积块,包含三个卷积

self.conv3_1 = Conv2D(in_channels=in_channels[2], out_channels=in_channels[3], kernel_size=3, padding=1,

stride=1)

self.conv3_2 = Conv2D(in_channels=in_channels[3], out_channels=in_channels[3], kernel_size=3, padding=1,

stride=1)

self.conv3_3 = Conv2D(in_channels=in_channels[3], out_channels=in_channels[3], kernel_size=3, padding=1,

stride=1)

# 定义第四个卷积块,包含三个卷积

self.conv4_1 = Conv2D(in_channels=in_channels[3], out_channels=in_channels[4], kernel_size=3, padding=1,

stride=1)

self.conv4_2 = Conv2D(in_channels=in_channels[4], out_channels=in_channels[4], kernel_size=3, padding=1,

stride=1)

self.conv4_3 = Conv2D(in_channels=in_channels[4], out_channels=in_channels[4], kernel_size=3, padding=1,

stride=1)

# 定义第五个卷积块,包含三个卷积

self.conv5_1 = Conv2D(in_channels=in_channels[4], out_channels=in_channels[5], kernel_size=3, padding=1,

stride=1)

self.conv5_2 = Conv2D(in_channels=in_channels[5], out_channels=in_channels[5], kernel_size=3, padding=1,

stride=1)

self.conv5_3 = Conv2D(in_channels=in_channels[5], out_channels=in_channels[5], kernel_size=3, padding=1,

stride=1)

# 使用Sequential 将卷积和relu组成一个线性结构(fc + relu)

# 当输入为224x224时,经过五个卷积块和池化层后,特征维度变为[512x7x7]

self.fc1 = paddle.nn.Sequential(paddle.nn.Linear(512 * 7 * 7, 4096), paddle.nn.ReLU())

self.drop1_ratio = 0.5

self.dropout1 = paddle.nn.Dropout(self.drop1_ratio, mode='upscale_in_train')

# 使用Sequential 将卷积和relu组成一个线性结构(fc + relu)

self.fc2 = paddle.nn.Sequential(paddle.nn.Linear(4096, 4096), paddle.nn.ReLU())

self.drop2_ratio = 0.5

self.dropout2 = paddle.nn.Dropout(self.drop2_ratio, mode='upscale_in_train')

self.fc3 = paddle.nn.Linear(4096, 1)

self.relu = paddle.nn.ReLU()

self.pool = MaxPool2D(stride=2, kernel_size=2)

def forward(self, x):

x = self.relu(self.conv1_1(x))

x = self.relu(self.conv1_2(x))

x = self.pool(x)

x = self.relu(self.conv2_1(x))

x = self.relu(self.conv2_2(x))

x = self.pool(x)

x = self.relu(self.conv3_1(x))

x = self.relu(self.conv3_2(x))

x = self.relu(self.conv3_3(x))

x = self.pool(x)

x = self.relu(self.conv4_1(x))

x = self.relu(self.conv4_2(x))

x = self.relu(self.conv4_3(x))

x = self.pool(x)

x = self.relu(self.conv5_1(x))

x = self.relu(self.conv5_2(x))

x = self.relu(self.conv5_3(x))

x = self.pool(x)

x = paddle.flatten(x, 1, -1)

x = self.dropout1(self.relu(self.fc1(x)))

x = self.dropout2(self.relu(self.fc2(x)))

x = self.fc3(x)

return x高内聚低耦合,其他都不用改

# 创建模型

model = VGG()

# opt = paddle.optimizer.Adam(learning_rate=0.001, parameters=model.parameters())

opt = paddle.optimizer.Momentum(learning_rate=0.001, momentum=0.9, parameters=model.parameters())

# 启动训练过程

train_pm(model, opt)pytorch构建VGG

# 定义vgg网络

class VGG(torch.nn.Module):

def __init__(self):

super(VGG, self).__init__()

in_channels = [3, 64, 128, 256, 512, 512]

# 定义第一个卷积块,包含两个卷积

self.conv1_1 = Conv2d(in_channels=in_channels[0], out_channels=in_channels[1], kernel_size=3, padding=1, stride=1)

self.conv1_2 = Conv2d(in_channels=in_channels[1], out_channels=in_channels[1], kernel_size=3, padding=1, stride=1)

# 定义第二个卷积块,包含两个卷积

self.conv2_1 = Conv2d(in_channels=in_channels[1], out_channels=in_channels[2], kernel_size=3, padding=1,

stride=1)

self.conv2_2 = Conv2d(in_channels=in_channels[2], out_channels=in_channels[2], kernel_size=3, padding=1,

stride=1)

# 定义第三个卷积块,包含三个卷积

self.conv3_1 = Conv2d(in_channels=in_channels[2], out_channels=in_channels[3], kernel_size=3, padding=1,

stride=1)

self.conv3_2 = Conv2d(in_channels=in_channels[3], out_channels=in_channels[3], kernel_size=3, padding=1,

stride=1)

self.conv3_3 = Conv2d(in_channels=in_channels[3], out_channels=in_channels[3], kernel_size=3, padding=1,

stride=1)

# 定义第四个卷积块,包含三个卷积

self.conv4_1 = Conv2d(in_channels=in_channels[3], out_channels=in_channels[4], kernel_size=3, padding=1,

stride=1)

self.conv4_2 = Conv2d(in_channels=in_channels[4], out_channels=in_channels[4], kernel_size=3, padding=1,

stride=1)

self.conv4_3 = Conv2d(in_channels=in_channels[4], out_channels=in_channels[4], kernel_size=3, padding=1,

stride=1)

# 定义第五个卷积块,包含三个卷积

self.conv5_1 = Conv2d(in_channels=in_channels[4], out_channels=in_channels[5], kernel_size=3, padding=1,

stride=1)

self.conv5_2 = Conv2d(in_channels=in_channels[5], out_channels=in_channels[5], kernel_size=3, padding=1,

stride=1)

self.conv5_3 = Conv2d(in_channels=in_channels[5], out_channels=in_channels[5], kernel_size=3, padding=1,

stride=1)

# 使用Sequential 将卷积和relu组成一个线性结构(fc + relu)

# 当输入为224x224时,经过五个卷积块和池化层后,特征维度变为[512x7x7]

self.fc1 = torch.nn.Sequential(torch.nn.Linear(512 * 7 * 7, 4096), torch.nn.ReLU())

self.drop1_ratio = 0.5

self.dropout1 = torch.nn.Dropout(self.drop1_ratio)

# 使用Sequential 将卷积和relu组成一个线性结构(fc + relu)

self.fc2 = torch.nn.Sequential(torch.nn.Linear(4096, 4096), torch.nn.ReLU())

self.drop2_ratio = 0.5

self.dropout2 = torch.nn.Dropout(self.drop2_ratio)

self.fc3 = torch.nn.Linear(4096, 1)

self.relu = torch.nn.ReLU()

self.pool = MaxPool2d(stride=2, kernel_size=2)

def forward(self, x):

x = self.relu(self.conv1_1(x))

x = self.relu(self.conv1_2(x))

x = self.pool(x)

x = self.relu(self.conv2_1(x))

x = self.relu(self.conv2_2(x))

x = self.pool(x)

x = self.relu(self.conv3_1(x))

x = self.relu(self.conv3_2(x))

x = self.relu(self.conv3_3(x))

x = self.pool(x)

x = self.relu(self.conv4_1(x))

x = self.relu(self.conv4_2(x))

x = self.relu(self.conv4_3(x))

x = self.pool(x)

x = self.relu(self.conv5_1(x))

x = self.relu(self.conv5_2(x))

x = self.relu(self.conv5_3(x))

x = self.pool(x)

x = torch.flatten(x, 1, -1)

x = self.dropout1(self.relu(self.fc1(x)))

x = self.dropout2(self.relu(self.fc2(x)))

x = self.fc3(x)

return x

model = VGG()

# opt = paddle.optimizer.Adam(learning_rate=0.001, parameters=model.parameters())

opt = optim.SGD(lr=0.001, params=model.parameters(), momentum=0.9)

# 启动训练过程

train_pm(model, opt)GoogLeNet

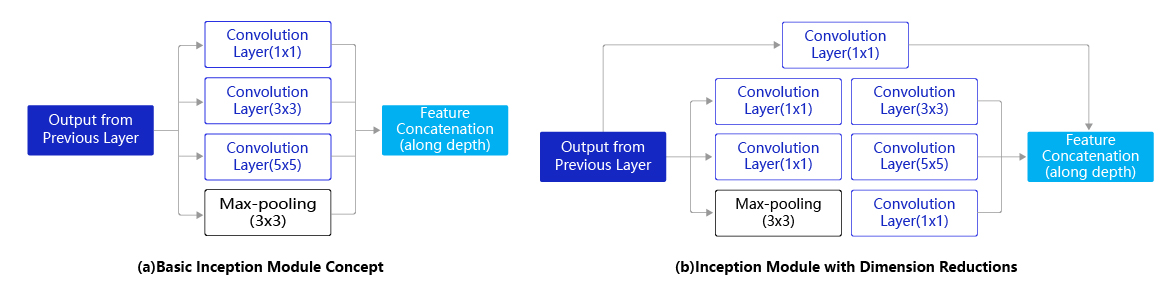

GoogLeNet是2014年ImageNet比赛的冠军,它的主要特点是网络不仅有深度,还在横向上具有“宽度”。由于图像信息在空间尺寸上的巨大差异,如何选择合适的卷积核来提取特征就显得比较困难了。空间分布范围更广的图像信息适合用较大的卷积核来提取其特征;而空间分布范围较小的图像信息则适合用较小的卷积核来提取其特征。为了解决这个问题,GoogLeNet提出了一种被称为Inception模块的方案。

Inception模块实现

# GoogLeNet模型代码

import numpy as np

import paddle

from paddle.nn import Conv2D, MaxPool2D, AdaptiveAvgPool2D, Linear

## 组网

import paddle.nn.functional as F

# 定义Inception块

class Inception(paddle.nn.Layer):

def __init__(self, c0, c1, c2, c3, c4, **kwargs):

'''

Inception模块的实现代码,

c1,图(b)中第一条支路1x1卷积的输出通道数,数据类型是整数

c2,图(b)中第二条支路卷积的输出通道数,数据类型是tuple或list,

其中c2[0]是1x1卷积的输出通道数,c2[1]是3x3

c3,图(b)中第三条支路卷积的输出通道数,数据类型是tuple或list,

其中c3[0]是1x1卷积的输出通道数,c3[1]是3x3

c4,图(b)中第一条支路1x1卷积的输出通道数,数据类型是整数

'''

super(Inception, self).__init__()

# 依次创建Inception块每条支路上使用到的操作

self.p1_1 = Conv2D(in_channels=c0,out_channels=c1, kernel_size=1)

self.p2_1 = Conv2D(in_channels=c0,out_channels=c2[0], kernel_size=1)

self.p2_2 = Conv2D(in_channels=c2[0],out_channels=c2[1], kernel_size=3, padding=1)

self.p3_1 = Conv2D(in_channels=c0,out_channels=c3[0], kernel_size=1)

self.p3_2 = Conv2D(in_channels=c3[0],out_channels=c3[1], kernel_size=5, padding=2)

self.p4_1 = MaxPool2D(kernel_size=3, stride=1, padding=1)

self.p4_2 = Conv2D(in_channels=c0,out_channels=c4, kernel_size=1)

def forward(self, x):

# 支路1只包含一个1x1卷积

p1 = F.relu(self.p1_1(x))

# 支路2包含 1x1卷积 + 3x3卷积

p2 = F.relu(self.p2_2(F.relu(self.p2_1(x))))

# 支路3包含 1x1卷积 + 5x5卷积

p3 = F.relu(self.p3_2(F.relu(self.p3_1(x))))

# 支路4包含 最大池化和1x1卷积

p4 = F.relu(self.p4_2(self.p4_1(x)))

# 将每个支路的输出特征图拼接在一起作为最终的输出结果

return paddle.concat([p1, p2, p3, p4], axis=1)简单来说Inception模块就是三种不同尺寸的卷积核卷积完后再concat起来,因为stride都是1,并且有各自的padding所以输出的图像尺寸是不变的

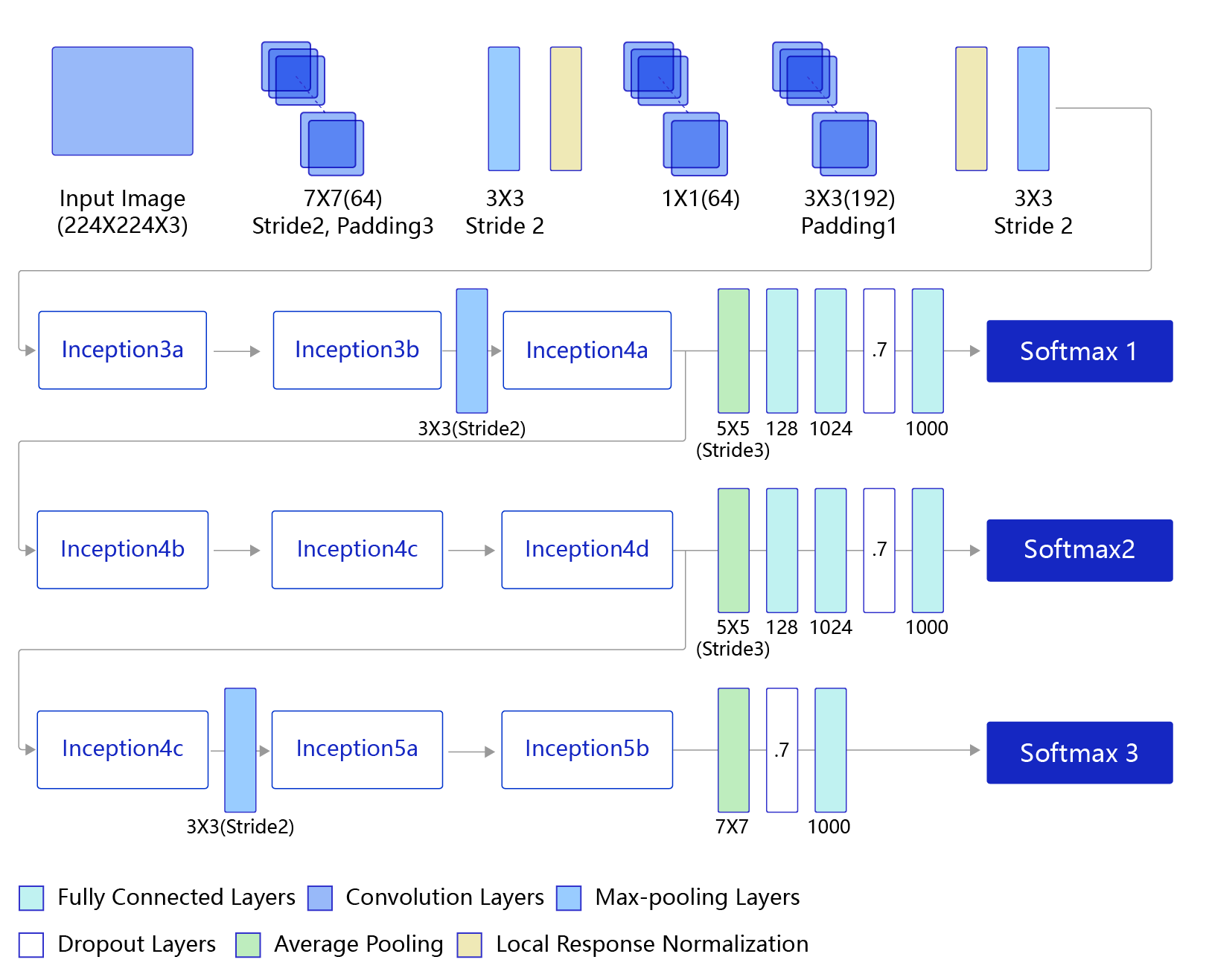

GoogLeNet的架构如图所示,在主体卷积部分中使用5个模块(block),每个模块之间使用步幅为2的3 ×3最大池化层来减小输出高宽。

- 第一模块使用一个64通道的7 × 7卷积层。

- 第二模块使用2个卷积层:首先是64通道的1 × 1卷积层,然后是将通道增大3倍的3 × 3卷积层。

- 第三模块串联2个完整的Inception块。

- 第四模块串联了5个Inception块。

- 第五模块串联了2 个Inception块。

- 第五模块的后面紧跟输出层,使用全局平均池化层来将每个通道的高和宽变成1,最后接上一个输出个数为标签类别数的全连接层。

说明: 在原作者的论文中添加了图中所示的softmax1和softmax2两个辅助分类器,如下图所示,训练时将三个分类器的损失函数进行加权求和,以缓解梯度消失现象。这里的程序作了简化,没有加入辅助分类器。

GoogLeNet具体实现

# GoogLeNet模型代码

import numpy as np

import paddle

from paddle.nn import Conv2D, MaxPool2D, AdaptiveAvgPool2D, Linear

## 组网

import paddle.nn.functional as F

# 定义Inception块

class Inception(paddle.nn.Layer):

def __init__(self, c0, c1, c2, c3, c4, **kwargs):

'''

Inception模块的实现代码,

c1,图(b)中第一条支路1x1卷积的输出通道数,数据类型是整数

c2,图(b)中第二条支路卷积的输出通道数,数据类型是tuple或list,

其中c2[0]是1x1卷积的输出通道数,c2[1]是3x3

c3,图(b)中第三条支路卷积的输出通道数,数据类型是tuple或list,

其中c3[0]是1x1卷积的输出通道数,c3[1]是3x3

c4,图(b)中第一条支路1x1卷积的输出通道数,数据类型是整数

'''

super(Inception, self).__init__()

# 依次创建Inception块每条支路上使用到的操作

self.p1_1 = Conv2D(in_channels=c0,out_channels=c1, kernel_size=1, stride=1)

self.p2_1 = Conv2D(in_channels=c0,out_channels=c2[0], kernel_size=1, stride=1)

self.p2_2 = Conv2D(in_channels=c2[0],out_channels=c2[1], kernel_size=3, padding=1, stride=1)

self.p3_1 = Conv2D(in_channels=c0,out_channels=c3[0], kernel_size=1, stride=1)

self.p3_2 = Conv2D(in_channels=c3[0],out_channels=c3[1], kernel_size=5, padding=2, stride=1)

self.p4_1 = MaxPool2D(kernel_size=3, stride=1, padding=1)

self.p4_2 = Conv2D(in_channels=c0,out_channels=c4, kernel_size=1, stride=1)

# # 新加一层batchnorm稳定收敛

# self.batchnorm = paddle.nn.BatchNorm2D(c1+c2[1]+c3[1]+c4)

def forward(self, x):

# 支路1只包含一个1x1卷积

p1 = F.relu(self.p1_1(x))

# 支路2包含 1x1卷积 + 3x3卷积

p2 = F.relu(self.p2_2(F.relu(self.p2_1(x))))

# 支路3包含 1x1卷积 + 5x5卷积

p3 = F.relu(self.p3_2(F.relu(self.p3_1(x))))

# 支路4包含 最大池化和1x1卷积

p4 = F.relu(self.p4_2(self.p4_1(x)))

# 将每个支路的输出特征图拼接在一起作为最终的输出结果

return paddle.concat([p1, p2, p3, p4], axis=1)

# return self.batchnorm()

class GoogLeNet(paddle.nn.Layer):

def __init__(self):

super(GoogLeNet, self).__init__()

# GoogLeNet包含五个模块,每个模块后面紧跟一个池化层

# 第一个模块包含1个卷积层

self.conv1 = Conv2D(in_channels=3,out_channels=64, kernel_size=7, padding=3, stride=1)

# 3x3最大池化

self.pool1 = MaxPool2D(kernel_size=3, stride=2, padding=1)

# 第二个模块包含2个卷积层

self.conv2_1 = Conv2D(in_channels=64,out_channels=64, kernel_size=1, stride=1)

self.conv2_2 = Conv2D(in_channels=64,out_channels=192, kernel_size=3, padding=1, stride=1)

# 3x3最大池化

self.pool2 = MaxPool2D(kernel_size=3, stride=2, padding=1)

# 第三个模块包含2个Inception块

self.block3_1 = Inception(192, 64, (96, 128), (16, 32), 32)

self.block3_2 = Inception(256, 128, (128, 192), (32, 96), 64)

# 3x3最大池化

self.pool3 = MaxPool2D(kernel_size=3, stride=2, padding=1)

# 第四个模块包含5个Inception块

self.block4_1 = Inception(480, 192, (96, 208), (16, 48), 64)

self.block4_2 = Inception(512, 160, (112, 224), (24, 64), 64)

self.block4_3 = Inception(512, 128, (128, 256), (24, 64), 64)

self.block4_4 = Inception(512, 112, (144, 288), (32, 64), 64)

self.block4_5 = Inception(528, 256, (160, 320), (32, 128), 128)

# 3x3最大池化

self.pool4 = MaxPool2D(kernel_size=3, stride=2, padding=1)

# 第五个模块包含2个Inception块

self.block5_1 = Inception(832, 256, (160, 320), (32, 128), 128)

self.block5_2 = Inception(832, 384, (192, 384), (48, 128), 128)

# 全局池化,尺寸用的是global_pooling,pool_stride不起作用

self.pool5 = AdaptiveAvgPool2D(output_size=1)

self.fc = Linear(in_features=1024, out_features=1)

def forward(self, x):

x = self.pool1(F.relu(self.conv1(x)))

x = self.pool2(F.relu(self.conv2_2(F.relu(self.conv2_1(x)))))

x = self.pool3(self.block3_2(self.block3_1(x)))

x = self.block4_3(self.block4_2(self.block4_1(x)))

x = self.pool4(self.block4_5(self.block4_4(x)))

x = self.pool5(self.block5_2(self.block5_1(x)))

x = paddle.reshape(x, [x.shape[0], -1])

x = self.fc(x)

return x

# 创建模型

model = GoogLeNet()

print(len(model.parameters()))

opt = paddle.optimizer.Momentum(learning_rate=0.001, momentum=0.9, parameters=model.parameters(), weight_decay=0.001)

# 启动训练过程

train_pm(model, opt)其实GoogLeNet的结构还是挺明白的,不管是卷积层还是组合的Inception层都不会改变图像尺寸大小而只是增加通道数,每次池化将图像大小减半,最后通过自适应平均池化层使每个通道变成1×1的size,再通过线性层得到最终输出。

同样使用pytorch来实现GoogLeNet网络。

# GoogLeNet模型代码

import numpy as np

import torch

from torch.nn import Conv2d, MaxPool2d, AdaptiveAvgPool2d, Linear

## 组网

import torch.nn.functional as F

# 定义Inception块

class Inception(torch.nn.Module):

def __init__(self, c0, c1, c2, c3, c4, **kwargs):

'''

Inception模块的实现代码,

c1,图(b)中第一条支路1x1卷积的输出通道数,数据类型是整数

c2,图(b)中第二条支路卷积的输出通道数,数据类型是tuple或list,

其中c2[0]是1x1卷积的输出通道数,c2[1]是3x3

c3,图(b)中第三条支路卷积的输出通道数,数据类型是tuple或list,

其中c3[0]是1x1卷积的输出通道数,c3[1]是3x3

c4,图(b)中第一条支路1x1卷积的输出通道数,数据类型是整数

'''

super(Inception, self).__init__()

# 依次创建Inception块每条支路上使用到的操作

self.p1_1 = Conv2d(in_channels=c0,out_channels=c1, kernel_size=1, stride=1)

self.p2_1 = Conv2d(in_channels=c0,out_channels=c2[0], kernel_size=1, stride=1)

self.p2_2 = Conv2d(in_channels=c2[0],out_channels=c2[1], kernel_size=3, padding=1, stride=1)

self.p3_1 = Conv2d(in_channels=c0,out_channels=c3[0], kernel_size=1, stride=1)

self.p3_2 = Conv2d(in_channels=c3[0],out_channels=c3[1], kernel_size=5, padding=2, stride=1)

self.p4_1 = MaxPool2d(kernel_size=3, stride=1, padding=1)

self.p4_2 = Conv2d(in_channels=c0,out_channels=c4, kernel_size=1, stride=1)

# # 新加一层batchnorm稳定收敛

# self.batchnorm = torch.nn.BatchNorm2d(c1+c2[1]+c3[1]+c4)

def forward(self, x):

# 支路1只包含一个1x1卷积

p1 = F.relu(self.p1_1(x))

# 支路2包含 1x1卷积 + 3x3卷积

p2 = F.relu(self.p2_2(F.relu(self.p2_1(x))))

# 支路3包含 1x1卷积 + 5x5卷积

p3 = F.relu(self.p3_2(F.relu(self.p3_1(x))))

# 支路4包含 最大池化和1x1卷积

p4 = F.relu(self.p4_2(self.p4_1(x)))

# 将每个支路的输出特征图拼接在一起作为最终的输出结果

return torch.concat([p1, p2, p3, p4], axis=1)

# return self.batchnorm()

class GoogLeNet(torch.nn.Module):

def __init__(self):

super(GoogLeNet, self).__init__()

# GoogLeNet包含五个模块,每个模块后面紧跟一个池化层

# 第一个模块包含1个卷积层

self.conv1 = Conv2d(in_channels=3,out_channels=64, kernel_size=7, padding=3, stride=1)

# 3x3最大池化

self.pool1 = MaxPool2d(kernel_size=3, stride=2, padding=1)

# 第二个模块包含2个卷积层

self.conv2_1 = Conv2d(in_channels=64,out_channels=64, kernel_size=1, stride=1)

self.conv2_2 = Conv2d(in_channels=64,out_channels=192, kernel_size=3, padding=1, stride=1)

# 3x3最大池化

self.pool2 = MaxPool2d(kernel_size=3, stride=2, padding=1)

# 第三个模块包含2个Inception块

self.block3_1 = Inception(192, 64, (96, 128), (16, 32), 32)

self.block3_2 = Inception(256, 128, (128, 192), (32, 96), 64)

# 3x3最大池化

self.pool3 = MaxPool2d(kernel_size=3, stride=2, padding=1)

# 第四个模块包含5个Inception块

self.block4_1 = Inception(480, 192, (96, 208), (16, 48), 64)

self.block4_2 = Inception(512, 160, (112, 224), (24, 64), 64)

self.block4_3 = Inception(512, 128, (128, 256), (24, 64), 64)

self.block4_4 = Inception(512, 112, (144, 288), (32, 64), 64)

self.block4_5 = Inception(528, 256, (160, 320), (32, 128), 128)

# 3x3最大池化

self.pool4 = MaxPool2d(kernel_size=3, stride=2, padding=1)

# 第五个模块包含2个Inception块

self.block5_1 = Inception(832, 256, (160, 320), (32, 128), 128)

self.block5_2 = Inception(832, 384, (192, 384), (48, 128), 128)

# 全局池化,尺寸用的是global_pooling,pool_stride不起作用

self.pool5 = AdaptiveAvgPool2d(output_size=1)

self.fc = Linear(in_features=1024, out_features=1)

def forward(self, x):

x = self.pool1(F.relu(self.conv1(x)))

x = self.pool2(F.relu(self.conv2_2(F.relu(self.conv2_1(x)))))

x = self.pool3(self.block3_2(self.block3_1(x)))

x = self.block4_3(self.block4_2(self.block4_1(x)))

x = self.pool4(self.block4_5(self.block4_4(x)))

x = self.pool5(self.block5_2(self.block5_1(x)))

x = torch.reshape(x, [x.shape[0], -1])

x = self.fc(x)

return x

# 创建模型

model = GoogLeNet()

#print(model.parameters)

opt = optim.SGD(lr=0.001, params=model.parameters(), momentum=0.9)

# 启动训练过程

train_pm(model, opt)总结一下把paddle模型转换成pytorch的步骤:

- paddle换成torch

- 网络继承类由paddle.nn.Layer改成torch.nn.Module

- Conv2D,MaxPool2D等改成Conv2d,MaxPool2d

- 优化器改成optim.SGD等等,参数都是缩写

- torch的GPU模式复杂一点,要加好几个cuda()

jupyter notebook的替换快捷键是ESC+F

ResNet

ResNet是2015年ImageNet比赛的冠军,将识别错误率降低到了3.6%,这个结果甚至超出了正常人眼识别的精度。

通过前面几个经典模型学习,我们可以发现随着深度学习的不断发展,模型的层数越来越多,网络结构也越来越复杂。那么是否加深网络结构,就一定会得到更好的效果呢?从理论上来说,假设新增加的层都是恒等映射,只要原有的层学出跟原模型一样的参数,那么深模型结构就能达到原模型结构的效果。换句话说,原模型的解只是新模型的解的子空间,在新模型解的空间里应该能找到比原模型解对应的子空间更好的结果。但是实践表明,增加网络的层数之后,训练误差往往不降反升。

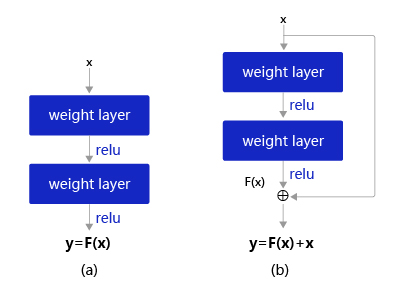

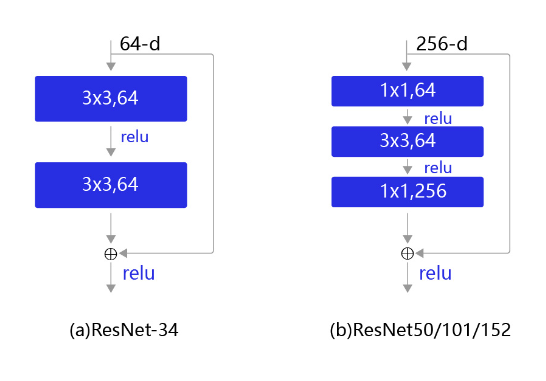

Kaiming He等人提出了残差网络ResNet来解决上述问题,其基本思想如图所示。

- 图6(a):表示增加网络的时候,将x映射成y=F(x)输出。

- 图6(b):对图6(a)作了改进,输出y=F(x)+x。这时不是直接学习输出特征yyy的表示,而是学习y−x。

- 如果想学习出原模型的表示,只需将F(x)的参数全部设置为0,则y=x是恒等映射。

- F(x)=y−x也叫做残差项,如果x→y的映射接近恒等映射,图6(b)中通过学习残差项也比图6(a)学习完整映射形式更加容易。

图6(b)的结构是残差网络的基础,这种结构也叫做残差块(Residual block)。输入xxx通过跨层连接,能更快的向前传播数据,或者向后传播梯度。通俗的比喻,在火热的电视节目《王牌对王牌》上有一个“传声筒”的游戏,排在队首的嘉宾把看到的影视片段表演给后面一个嘉宾看,经过四五个嘉宾后,最后一个嘉宾如果能表演出更多原剧的内容,就能取得高分。我们常常会发现刚开始的嘉宾往往表演出最多的信息(类似于Loss),而随着表演的传递,有效的表演信息越来越少(类似于梯度弥散)。如果每个嘉宾都能看到原始的影视片段,那么相信传声筒的效果会好很多。类似的,由于ResNet每层都存在直连的旁路,相当于每一层都和最终的损失有“直接对话”的机会,自然可以更好的解决梯度弥散的问题。残差块的具体设计方案如 图7 所示,这种设计方案也常称作瓶颈结构(BottleNeck)。1*1的卷积核可以非常方便的调整中间层的通道数,在进入3*3的卷积层之前减少通道数(256->64),经过该卷积层后再恢复通道数(64->256),可以显著减少网络的参数量。这个结构(256->64->256)像一个中间细,两头粗的瓶颈,所以被称为“BottleNeck”。

ResNet实现代码如下

# -*- coding:utf-8 -*-

# ResNet模型代码

import numpy as np

import paddle

import paddle.nn as nn

import paddle.nn.functional as F

# ResNet中使用了BatchNorm层,在卷积层的后面加上BatchNorm以提升数值稳定性

# 定义卷积批归一化块

class ConvBNLayer(paddle.nn.Layer):

def __init__(self,

num_channels,

num_filters,

filter_size,

stride=1,

groups=1,

act=None):

"""

num_channels, 卷积层的输入通道数

num_filters, 卷积层的输出通道数

stride, 卷积层的步幅

groups, 分组卷积的组数,默认groups=1不使用分组卷积

"""

super(ConvBNLayer, self).__init__()

# 创建卷积层

self._conv = nn.Conv2D(

in_channels=num_channels,

out_channels=num_filters,

kernel_size=filter_size,

stride=stride,

padding=(filter_size - 1) // 2,

groups=groups,

bias_attr=False)

# 创建BatchNorm层

self._batch_norm = paddle.nn.BatchNorm2D(num_filters)

self.act = act

def forward(self, inputs):

y = self._conv(inputs)

y = self._batch_norm(y)

if self.act == 'leaky':

y = F.leaky_relu(x=out, negative_slope=0.1)

elif self.act == 'relu':

y = F.relu(x=y)

return y

# 定义残差块

# 每个残差块会对输入图片做三次卷积,然后跟输入图片进行短接

# 如果残差块中第三次卷积输出特征图的形状与输入不一致,则对输入图片做1x1卷积,将其输出形状调整成一致

class BottleneckBlock(paddle.nn.Layer):

def __init__(self,

num_channels,

num_filters,

stride,

shortcut=True):

super(BottleneckBlock, self).__init__()

# 创建第一个卷积层 1x1

self.conv0 = ConvBNLayer(

num_channels=num_channels,

num_filters=num_filters,

filter_size=1,

act='relu')

# 创建第二个卷积层 3x3

self.conv1 = ConvBNLayer(

num_channels=num_filters,

num_filters=num_filters,

filter_size=3,

stride=stride,

act='relu')

# 创建第三个卷积 1x1,但输出通道数乘以4

self.conv2 = ConvBNLayer(

num_channels=num_filters,

num_filters=num_filters * 4,

filter_size=1,

act=None)

# 如果conv2的输出跟此残差块的输入数据形状一致,则shortcut=True

# 否则shortcut = False,添加1个1x1的卷积作用在输入数据上,使其形状变成跟conv2一致

if not shortcut:

self.short = ConvBNLayer(

num_channels=num_channels,

num_filters=num_filters * 4,

filter_size=1,

stride=stride)

self.shortcut = shortcut

self._num_channels_out = num_filters * 4

def forward(self, inputs):

y = self.conv0(inputs)

conv1 = self.conv1(y)

conv2 = self.conv2(conv1)

# 如果shortcut=True,直接将inputs跟conv2的输出相加

# 否则需要对inputs进行一次卷积,将形状调整成跟conv2输出一致

if self.shortcut:

short = inputs

else:

short = self.short(inputs)

y = paddle.add(x=short, y=conv2)

y = F.relu(y)

return y

# 定义ResNet模型

class ResNet(paddle.nn.Layer):

def __init__(self, layers=50, class_dim=1):

"""

layers, 网络层数,可以是50, 101或者152

class_dim,分类标签的类别数

"""

super(ResNet, self).__init__()

self.layers = layers

supported_layers = [50, 101, 152]

assert layers in supported_layers, \

"supported layers are {} but input layer is {}".format(supported_layers, layers)

if layers == 50:

#ResNet50包含多个模块,其中第2到第5个模块分别包含3、4、6、3个残差块

depth = [3, 4, 6, 3]

elif layers == 101:

#ResNet101包含多个模块,其中第2到第5个模块分别包含3、4、23、3个残差块

depth = [3, 4, 23, 3]

elif layers == 152:

#ResNet152包含多个模块,其中第2到第5个模块分别包含3、8、36、3个残差块

depth = [3, 8, 36, 3]

# 残差块中使用到的卷积的输出通道数

num_filters = [64, 128, 256, 512]

# ResNet的第一个模块,包含1个7x7卷积,后面跟着1个最大池化层

self.conv = ConvBNLayer(

num_channels=3,

num_filters=64,

filter_size=7,

stride=2,

act='relu')

self.pool2d_max = nn.MaxPool2D(

kernel_size=3,

stride=2,

padding=1)

# ResNet的第二到第五个模块c2、c3、c4、c5

self.bottleneck_block_list = []

num_channels = 64

for block in range(len(depth)):

shortcut = False

for i in range(depth[block]):

bottleneck_block = self.add_sublayer(

'bb_%d_%d' % (block, i),

BottleneckBlock(

num_channels=num_channels,

num_filters=num_filters[block],

stride=2 if i == 0 and block != 0 else 1, # c3、c4、c5将会在第一个残差块使用stride=2;其余所有残差块stride=1

shortcut=shortcut))

num_channels = bottleneck_block._num_channels_out

self.bottleneck_block_list.append(bottleneck_block)

shortcut = True

# 在c5的输出特征图上使用全局池化

self.pool2d_avg = paddle.nn.AdaptiveAvgPool2D(output_size=1)

# stdv用来作为全连接层随机初始化参数的方差

import math

stdv = 1.0 / math.sqrt(2048 * 1.0)

# 创建全连接层,输出大小为类别数目,经过残差网络的卷积和全局池化后,

# 卷积特征的维度是[B,2048,1,1],故最后一层全连接的输入维度是2048

self.out = nn.Linear(in_features=2048, out_features=class_dim,

weight_attr=paddle.ParamAttr(

initializer=paddle.nn.initializer.Uniform(-stdv, stdv)))

def forward(self, inputs):

y = self.conv(inputs)

y = self.pool2d_max(y)

for bottleneck_block in self.bottleneck_block_list:

y = bottleneck_block(y)

y = self.pool2d_avg(y)

y = paddle.reshape(y, [y.shape[0], -1])

y = self.out(y)

return y

# 创建模型

model = ResNet()

# 定义优化器

opt = paddle.optimizer.Momentum(learning_rate=0.001, momentum=0.9, parameters=model.parameters(), weight_decay=0.001)

# 启动训练过程

train_pm(model, opt)研究一下实现的代码,首先定义了ConvBNLayer,其实就是卷积核加了个归一化

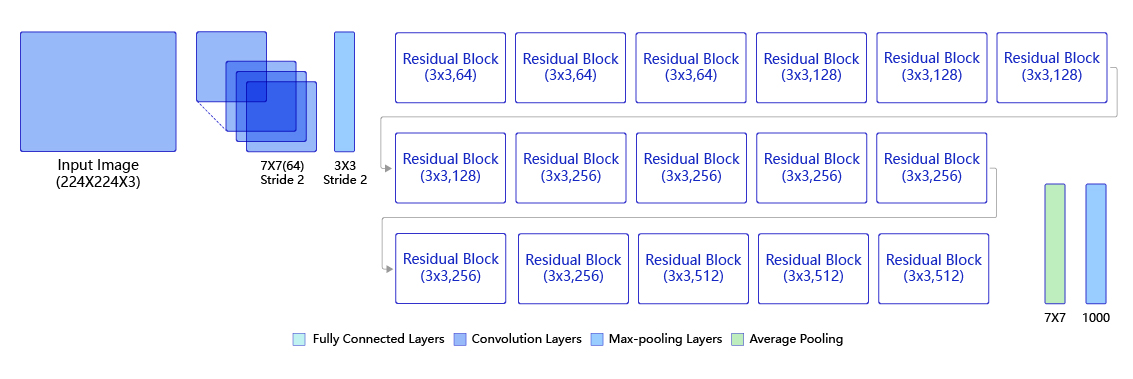

之后定义BottleneckBlock,看代码就明白什么叫残差了,输出是卷积的输出与输入相加(注意如果size不一样要加short层1×1卷积增加四倍通道数,就是每一模块第一个残差块,输出通道会四倍,之后每个残差块输入输出通道数不变)

每一BottleneckBlock模块的channels就是num_filter的四倍,第一模块stride都是1所以size不变,其后每模块的第一个残差块的stride=2,size会减半

打印一下每层输出的size与我们手动计算的对比一下,没有错误。

x = np.random.randn(*[1,3,224,224])

x = x.astype('float32')

x = paddle.to_tensor(x)

m = ResNet()

y = m.conv(x)

print(y.shape)

y = m.pool2d_max(y)

x = np.random.randn(*[1,3,224,224])

x = x.astype('float32')

x = paddle.to_tensor(x)

m = ResNet()

y = m.conv(x)

print(y.shape)

y = m.pool2d_max(y)

for bottleneck_block in m.bottleneck_block_list:

y = bottleneck_block(y)

print(y.shape)

y = m.pool2d_avg(y)

print(y.shape)

y = paddle.reshape(y, [y.shape[0], -1])

print(y.shape)

y = m.out(y)

print(y.shape)

"""-----------------------输出------------------------"""

[1, 64, 112, 112]

[1, 256, 56, 56]

[1, 256, 56, 56]

[1, 256, 56, 56]

[1, 512, 28, 28]

[1, 512, 28, 28]

[1, 512, 28, 28]

[1, 512, 28, 28]

[1, 1024, 14, 14]

[1, 1024, 14, 14]

[1, 1024, 14, 14]

[1, 1024, 14, 14]

[1, 1024, 14, 14]

[1, 1024, 14, 14]

[1, 2048, 7, 7]

[1, 2048, 7, 7]

[1, 2048, 7, 7]

[1, 2048, 1, 1]

[1, 2048]

[1, 1]接着使用torch来实现

# -*- coding:utf-8 -*-

# ResNet模型代码

import numpy as np

import torch

import torch.nn as nn

import torch.nn.functional as F

# ResNet中使用了BatchNorm层,在卷积层的后面加上BatchNorm以提升数值稳定性

# 定义卷积批归一化块

class ConvBNLayer(torch.nn.Module):

def __init__(self,

num_channels,

num_filters,

filter_size,

stride=1,

groups=1,

act=None):

"""

num_channels, 卷积层的输入通道数

num_filters, 卷积层的输出通道数

stride, 卷积层的步幅

groups, 分组卷积的组数,默认groups=1不使用分组卷积

"""

super(ConvBNLayer, self).__init__()

# 创建卷积层

self._conv = nn.Conv2d(

in_channels=num_channels,

out_channels=num_filters,

kernel_size=filter_size,

stride=stride,

padding=(filter_size - 1) // 2,

groups=groups,).cuda()

# 创建BatchNorm层

self._batch_norm = torch.nn.BatchNorm2d(num_filters).cuda()

self.act = act

def forward(self, inputs):

y = self._conv(inputs)

y = self._batch_norm(y)

if self.act == 'leaky':

y = F.leaky_relu(y, negative_slope=0.1)

elif self.act == 'relu':

y = F.relu(y)

return y

# 定义残差块

# 每个残差块会对输入图片做三次卷积,然后跟输入图片进行短接

# 如果残差块中第三次卷积输出特征图的形状与输入不一致,则对输入图片做1x1卷积,将其输出形状调整成一致

class BottleneckBlock(torch.nn.Module):

def __init__(self,

num_channels,

num_filters,

stride,

shortcut=True):

super(BottleneckBlock, self).__init__()

# 创建第一个卷积层 1x1

self.conv0 = ConvBNLayer(

num_channels=num_channels,

num_filters=num_filters,

filter_size=1,

act='relu')

# 创建第二个卷积层 3x3

self.conv1 = ConvBNLayer(

num_channels=num_filters,

num_filters=num_filters,

filter_size=3,

stride=stride,

act='relu')

# 创建第三个卷积 1x1,但输出通道数乘以4

self.conv2 = ConvBNLayer(

num_channels=num_filters,

num_filters=num_filters * 4,

filter_size=1,

act=None)

# 如果conv2的输出跟此残差块的输入数据形状一致,则shortcut=True

# 否则shortcut = False,添加1个1x1的卷积作用在输入数据上,使其形状变成跟conv2一致

if not shortcut:

self.short = ConvBNLayer(

num_channels=num_channels,

num_filters=num_filters * 4,

filter_size=1,

stride=stride)

self.shortcut = shortcut

self._num_channels_out = num_filters * 4

def forward(self, inputs):

y = self.conv0(inputs)

conv1 = self.conv1(y)

conv2 = self.conv2(conv1)

# 如果shortcut=True,直接将inputs跟conv2的输出相加

# 否则需要对inputs进行一次卷积,将形状调整成跟conv2输出一致

if self.shortcut:

short = inputs

else:

short = self.short(inputs)

y = torch.add(short, conv2)

y = F.relu(y)

return y

# 定义ResNet模型

class ResNet(torch.nn.Module):

def __init__(self, layers=50, class_dim=1):

"""

layers, 网络层数,可以是50, 101或者152

class_dim,分类标签的类别数

"""

super(ResNet, self).__init__()

self.layers = layers

supported_layers = [50, 101, 152]

assert layers in supported_layers, \

"supported layers are {} but input layer is {}".format(supported_layers, layers)

if layers == 50:

#ResNet50包含多个模块,其中第2到第5个模块分别包含3、4、6、3个残差块

depth = [3, 4, 6, 3]

elif layers == 101:

#ResNet101包含多个模块,其中第2到第5个模块分别包含3、4、23、3个残差块

depth = [3, 4, 23, 3]

elif layers == 152:

#ResNet152包含多个模块,其中第2到第5个模块分别包含3、8、36、3个残差块

depth = [3, 8, 36, 3]

# 残差块中使用到的卷积的输出通道数

num_filters = [64, 128, 256, 512]

# ResNet的第一个模块,包含1个7x7卷积,后面跟着1个最大池化层

self.conv = ConvBNLayer(

num_channels=3,

num_filters=64,

filter_size=7,

stride=2,

act='relu')

self.pool2d_max = nn.MaxPool2d(

kernel_size=3,

stride=2,

padding=1)

# ResNet的第二到第五个模块c2、c3、c4、c5

self.bottleneck_block_list = []

num_channels = 64

for block in range(len(depth)):

shortcut = False

for i in range(depth[block]):

self.bottleneck_block_list.append(

BottleneckBlock(

num_channels=num_channels,

num_filters=num_filters[block],

stride=2 if i == 0 and block != 0 else 1, # c3、c4、c5将会在第一个残差块使用stride=2;其余所有残差块stride=1

shortcut=shortcut))

num_channels = self.bottleneck_block_list[-1]._num_channels_out

shortcut = True

# 在c5的输出特征图上使用全局池化

self.pool2d_avg = torch.nn.AdaptiveAvgPool2d(output_size=1)

self.bn1=nn.BatchNorm1d(2048)

self.out = nn.Linear(in_features=2048, out_features=class_dim)

def forward(self, inputs):

y = self.conv(inputs)

y = self.pool2d_max(y)

for bottleneck_block in self.bottleneck_block_list:

y = bottleneck_block(y)

y = self.pool2d_avg(y)

y = torch.reshape(y, [y.shape[0], -1])

y = self.bn1(y)

y = self.out(y)

return y实现的时候还是遇到不少问题,api不一样,torch没有add_sublayer这个api,我就直接用append代替了,另外torch不允许y = F.relu(x=y)这种括号里赋值的写法,除此之外定义的conv和batchnorm层都要加.cuda()。最后paddle应该是线性层的参数正则化,torch的实现还没搞明白,写的时候以为是batchnorm,但想了想不一样,batchnorm还是把这个batch到这层的输入给归一化的,不是对参数进行限制。

Comments NOTHING